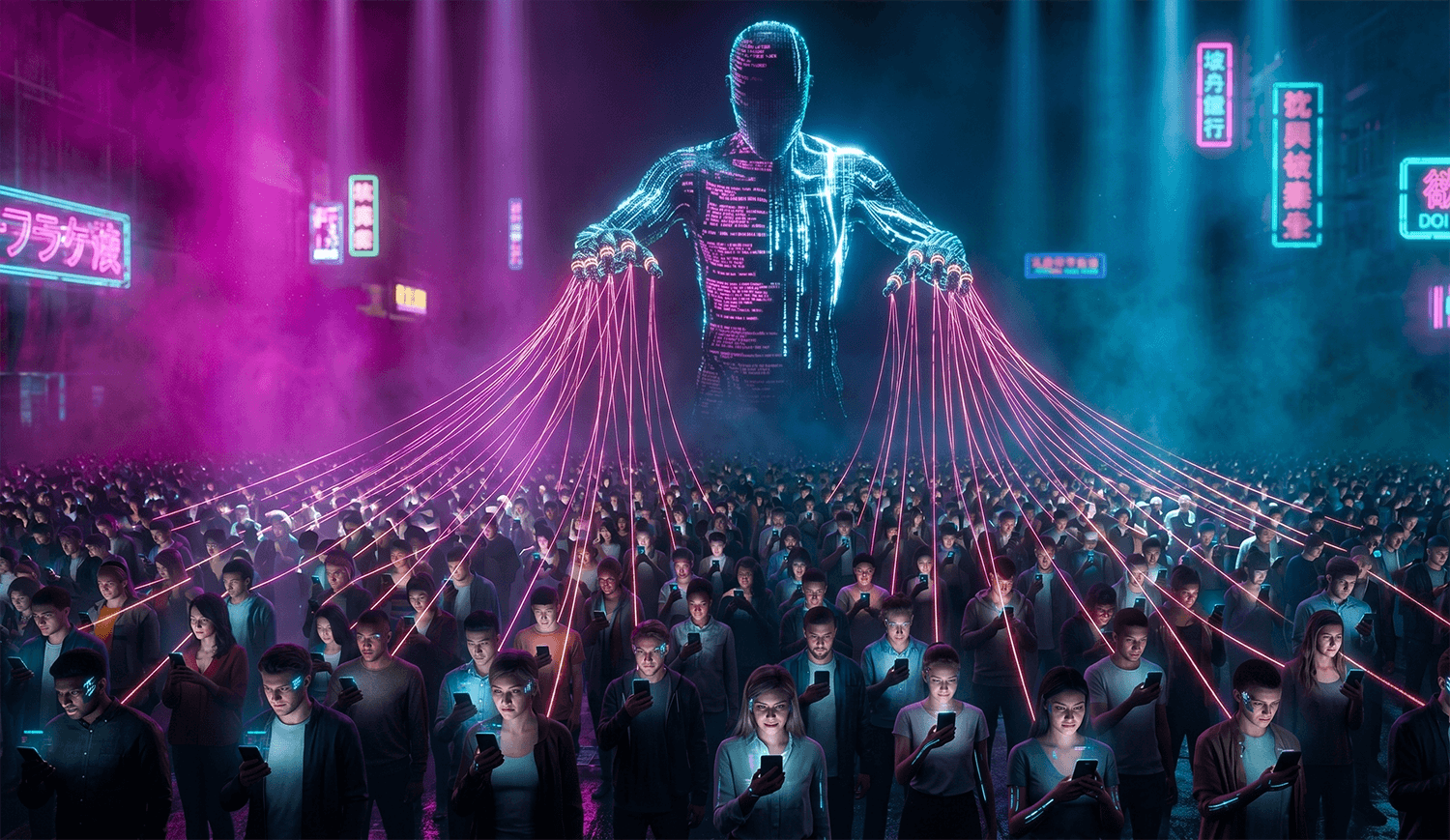

Fintech security experts are sounding a five-alarm warning: industrial-scale scam compounds powered by artificial intelligence are now pulling in more illicit money than the entire global narcotics trade. What was once a cottage industry of lone email phishers has evolved into a sprawling, cross-border enterprise employing tens of thousands of human operators backed by generative AI, deepfake tools, and automated money-laundering rails. The United Nations and regional fintech leaders estimate losses in the trillions of dollars globally. Ordinary Americans are increasingly targeted by scripts that sound eerily human, look convincing, and move faster than traditional fraud controls can respond.

If you have received a flirty text from a 'wrong number,' a LinkedIn message promising a crypto mentorship, or a video call from someone who looked exactly like your boss, you have likely brushed up against the edge of this machine. Here is how the new generation of AI-driven scam factories operates, and what you can do to shut the door on them.

How the AI-Powered Scam Factory Works

The operation usually begins inside a fortified compound in Southeast Asia, West Africa, or Eastern Europe, where trafficked or recruited workers sit in shifts at dozens of laptops. Each operator runs multiple fake identities simultaneously. Instead of typing every message themselves, they rely on large language models fine-tuned on romance, investment, and customer-support scripts. The AI drafts flirtatious replies, translates them into fluent English, Spanish, Mandarin, or German within seconds, and remembers the 'personality' it is supposed to project across weeks of conversation.

Targeting is also automated. Scam syndicates buy breached contact lists and use AI to score potential victims (widowers, recent retirees, crypto-curious professionals) for emotional vulnerability and wallet size. Once a target responds, the system escalates. Voice-cloning software can replicate a loved one's voice from a three-second TikTok clip. Deepfake video tools place a scammer's face onto a 'verified' financial advisor on a Zoom call. AI-generated trading dashboards show fake portfolio gains in real time, luring victims into so-called 'pig butchering' investment schemes that can drain retirement accounts over months.

The final stage is industrialized laundering. Stolen funds move through crypto mixers, shell company bank accounts, and money mules recruited through fake 'remote work' job postings. Because the AI can run 24/7 across dozens of targets per operator, the economics are staggering: a single compound can generate hundreds of millions of dollars per year, and the largest networks now eclipse the estimated $600 billion global illegal drug market.

Red Flags to Watch For

- A stranger's text, dating app match, or social media DM that quickly pivots from small talk to crypto trading, forex, or gold investing

- Any video call, voice note, or 'urgent' message from a relative, executive, or government official asking you to move money, buy gift cards, or confirm a code. Verify through a number you already know before acting.

- Investment platforms or advisors you cannot verify as registered on the SEC, FINRA, or CFTC websites

- Profile photos that look too polished, or reverse-image searches that match them to stock or modeling photos

- Contacts who refuse in-person meetings but provide endless 'proof' screenshots

- Pressure to keep the relationship or investment secret from family, your bank, or 'jealous' friends

- Withdrawal requests suddenly blocked behind new 'taxes,' 'unlock fees,' or 'compliance deposits'

- Grammar that is unnaturally perfect across multiple languages, or emotional responses that arrive within seconds around the clock

Real Victim Report

One Naperville, Illinois, resident reported to the FTC that she began chatting with a man on a language-learning app who claimed to be a Singapore-based architect. Over four months, AI-assisted conversations and a convincing deepfake video call persuaded her to move $214,000 into a crypto 'arbitrage' platform that showed daily gains. She only realized it was a scam when the site demanded a $38,000 'IRS clearance fee' before she could withdraw. By then, the wallet addresses had already been emptied into an overseas mixer.

What To Do If You've Been Targeted

- Stop all communication immediately. Do not send a 'final message,' do not try to recover money by paying a fee, and do not click any further links.

- Do not send more money or personal data, even if the scammer claims you need to pay taxes, fees, or 'unlock' your balance. This is always a secondary scam.

- Report the incident to the Federal Trade Commission at reportfraud.ftc.gov with screenshots, wallet addresses, phone numbers, and the platform name.

- File a complaint with the FBI's Internet Crime Complaint Center at ic3.gov. The FBI is the agency that coordinates cross-border takedowns of scam compounds.

- Contact your bank, credit card issuer, or crypto exchange right away to request a reversal, chargeback, or account freeze. Time matters; some wires can still be recalled within 24 to 72 hours.

- Place a free fraud alert and consider a credit freeze with Equifax, Experian, and TransUnion to block new-account fraud.

- If you shared your Social Security number, driver's license, or banking credentials, enroll in an identity protection service such as Aura (aura.com/recentscam) to monitor your SSN, bank accounts, and the dark web for misuse.

How To Protect Yourself Going Forward

- Establish a private 'safe word' with close family members so voice-cloning calls can be instantly verified.

- Never take investment advice from someone you met online and have not met in person. Legitimate advisors are registered; check brokercheck.finra.org before sending a dollar.

- Slow down. AI scams weaponize urgency; a 24-hour pause before any wire, crypto transfer, or gift-card purchase defeats the vast majority of these schemes.

- Lock down your digital footprint: make social profiles private, limit voice and video posts, and use unique passwords with a reputable password manager.

Frequently Asked Questions

Can I really tell a deepfake video call from a real one?

Is the money recoverable if I sent crypto to a scam factory?

Why do these scams feel so personal and emotionally convincing?

Written By

Daniel spent 11 years investigating wire fraud and elder exploitation before moving to consumer education. He still thinks like an investigator.